How To Calibrate a Pressure Transducer

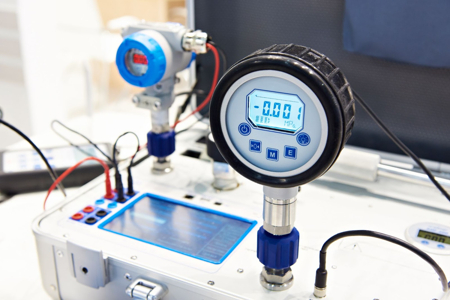

Figure 1: Reference pressure transducer in a portable calibrator

Pressure transducer calibration ensures the accuracy and reliability of the instrument. Without proper calibration, the transducer's measurements could be incorrect, leading to errors in sensitive applications. This article is a step-by-step guide on how to calibrate a pressure transducer. Read our pressure transducer article for information on how pressure transducers work and the types available.

Table of contents

- Pressure transducer calibration equipment

- Pressure transducer calibration process

- Pressure transducer calibration curve

- How often to calibrate a pressure transducer

- Transducer calibration standards and certifications

- FAQs


Pressure transducer calibration equipment

Pressure transducer calibration equipment typically consists of a pressure generator, a reference pressure transducer, and calibration software.

- Pressure generator: A pressure generator produces the required pressure for calibration. Depending on the specific calibration requirements, it can be a manual pump, an automatic pump, or a deadweight tester.

- Reference pressure transducer: The reference pressure transducer has precisely known performance characteristics. It serves as the comparison point for calibrating the pressure transducer under test.

- Calibration software: Calibration software records and analyzes the data produced during calibration. This software often includes features for managing calibration schedules, storing calibration records, and generating reports.

Other tools, such as pressure gauges and multimeters, are used during the process to measure pressure and electrical signals, respectively.

Compact devices such as portable calibrators (Figure 1) can also verify and adjust the accuracy of pressure transducers. They generate known reference signals that are applied to the instrument being calibrated. By comparing the instrument's readings with the reference signal, the user can make adjustments to ensure accurate measurements in field and industrial settings.

Pressure transducer calibration process

The calibration procedure typically involves applying known pressures to the transducer and comparing its output at each pressure with the output expected for that pressure. Adjustments are made until the output matches the expected values within the required accuracy.
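The comparison step can be sketched in a few lines, assuming an ideal linear transducer whose output spans a known voltage range over its pressure range. The sketch computes the expected output at each applied pressure and reports the deviation as a percentage of the full-scale output span (%FS). The 0-10 bar / 0-5 V rating, the readings, the 0.25 %FS tolerance, and all function names are hypothetical.

```python
# Hypothetical example: a 0-10 bar transducer with an ideal 0-5 V linear output.

def expected_output(pressure_bar, p_min=0.0, p_max=10.0, v_min=0.0, v_max=5.0):
    """Ideal output (V) of a linear transducer at the given applied pressure (bar)."""
    return v_min + (pressure_bar - p_min) * (v_max - v_min) / (p_max - p_min)


def error_percent_fs(pressure_bar, measured_v, p_min=0.0, p_max=10.0, v_min=0.0, v_max=5.0):
    """Measured-minus-expected output, as a percentage of the full-scale output span."""
    ideal = expected_output(pressure_bar, p_min, p_max, v_min, v_max)
    return 100.0 * (measured_v - ideal) / (v_max - v_min)


def within_tolerance(readings, tolerance_pct_fs, **spec):
    """True if every (pressure, output) reading is within the tolerance, in %FS."""
    return all(abs(error_percent_fs(p, v, **spec)) <= tolerance_pct_fs for p, v in readings)


# Hypothetical readings: (applied pressure in bar, measured output in V)
READINGS = [(0.0, 0.01), (2.5, 1.26), (5.0, 2.49), (7.5, 3.76), (10.0, 5.02)]

if __name__ == "__main__":
    for p, v in READINGS:
        print(f"{p:5.1f} bar: expected {expected_output(p):.3f} V, "
              f"measured {v:.3f} V, error {error_percent_fs(p, v):+.2f} %FS")
    print("within 0.25 %FS:", within_tolerance(READINGS, 0.25))
```

With these readings, the 10 bar point is off by about 0.4 %FS, so the check fails at a 0.25 %FS tolerance and the transducer would need adjustment.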

- Set up the transducer: Place the transducer in a stable environment free from vibrations or movements.

- Pre-calibration preparation: Apply a pressure close to the transducer's maximum rated pressure, typically about 80-90% of full scale. For instance, if the maximum pressure it can handle is 10 bar, pressurize the device to around 8-9 bar. Hold this pressure steady for approximately 30 seconds, then release it. This "exercising" step helps the transducer respond more consistently during calibration. Finally, with no pressure applied, set the transducer baseline to zero before starting the calibration.

- Calibration:

  - Increase the pressure applied to the transducer in small increments.

  - At each increment, record the applied pressure with a pressure gauge and measure the corresponding output voltage with a multimeter. Repeat until the transducer's full scale is reached.

  - Then decrease the pressure back to zero in the same increments, recording the readings at each step, to capture the transducer's behavior in both directions (hysteresis).

  - At each test point, allow the reading about 30 seconds to settle and stabilize before recording it. Use more test points if needed to improve the calibration accuracy.

- Checking and recording: Compare the readings with a reference device. Any mismatches could be due to calibration drift (the gradual change in measurement accuracy of an instrument over time), faulty equipment, environmental conditions, procedural errors, or reference device issues. Document the results for future use.
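The increasing and decreasing sweeps described above can be reduced to a single hysteresis figure. At each test point, take the difference between the output recorded on the way down and the output recorded on the way up, and express the largest difference as a percentage of the full-scale output span. This is a minimal sketch, assuming both sweeps use the same test points and a 5 V span. The data and names are hypothetical, and averaging the two sweeps is shown only as one possible option for building the curve.

```python
from dataclasses import dataclass


@dataclass
class SweepPoint:
    pressure_bar: float
    up_v: float    # output recorded while the pressure was increasing
    down_v: float  # output recorded at the same pressure while decreasing


def max_hysteresis_pct_fs(points, fs_span_v=5.0):
    """Largest down-minus-up output difference, as a percentage of full-scale span."""
    return max(abs(pt.down_v - pt.up_v) for pt in points) / fs_span_v * 100.0


def mean_outputs(points):
    """Average of the up and down readings at each pressure (one option for curve fitting)."""
    return [(pt.pressure_bar, (pt.up_v + pt.down_v) / 2.0) for pt in points]


# Hypothetical 0-10 bar sweep with a 0-5 V output
SWEEP = [
    SweepPoint(0.0, 0.00, 0.01),
    SweepPoint(2.5, 1.24, 1.27),
    SweepPoint(5.0, 2.49, 2.53),
    SweepPoint(7.5, 3.74, 3.77),
    SweepPoint(10.0, 5.00, 5.00),
]

if __name__ == "__main__":
    print(f"max hysteresis: {max_hysteresis_pct_fs(SWEEP):.2f} %FS")
    for p, v in mean_outputs(SWEEP):
        print(f"{p:5.1f} bar -> {v:.3f} V")
```

In this made-up sweep, the largest gap is at 5 bar (0.04 V), which works out to 0.8 %FS of hysteresis.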

Pressure transducer calibration curve

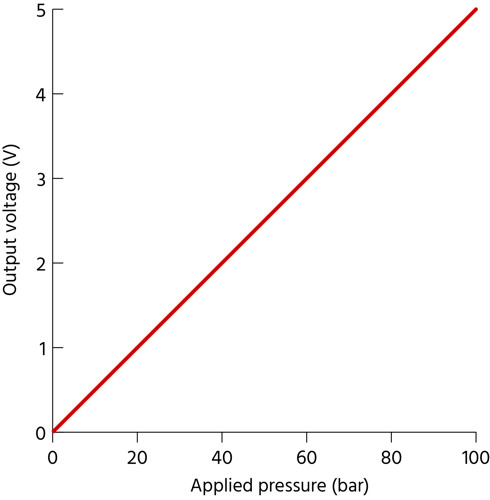

A calibration curve for a pressure transducer shows the relationship between pressure input and electrical signal output. This curve is established by applying known pressure inputs, recording output signals, and plotting these data points on a graph. The curve can then be used to predict outputs from given inputs.
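For a linear transducer, the plotting step can be replaced by a least-squares straight-line fit of output against pressure. The sketch below fits output = slope × pressure + offset with the standard closed-form formulas. It then uses the fit in both directions: predicting the output for a given pressure, and converting a measured output back to pressure. The linear model is an assumption (a non-linear transducer needs a different model), and the data points and function names are hypothetical.

```python
def fit_line(pressures, outputs):
    """Ordinary least-squares fit of output = slope * pressure + offset."""
    n = len(pressures)
    if n < 2 or n != len(outputs):
        raise ValueError("need at least two matching (pressure, output) pairs")
    mean_p = sum(pressures) / n
    mean_v = sum(outputs) / n
    sxx = sum((p - mean_p) ** 2 for p in pressures)
    if sxx == 0:
        raise ValueError("pressures must not all be equal")
    sxy = sum((p - mean_p) * (v - mean_v) for p, v in zip(pressures, outputs))
    slope = sxy / sxx
    offset = mean_v - slope * mean_p
    return slope, offset


def predict_output(pressure, slope, offset):
    """Predicted output (V) at a given pressure, from the fitted line."""
    return slope * pressure + offset


def pressure_from_output(voltage, slope, offset):
    """Invert the fitted line to convert a measured output (V) back to pressure."""
    return (voltage - offset) / slope


if __name__ == "__main__":
    # Hypothetical calibration data for a 0-10 bar, 0-5 V transducer
    pressures = [0, 2, 4, 6, 8, 10]
    outputs = [0.02, 1.01, 2.03, 2.98, 4.01, 5.00]
    slope, offset = fit_line(pressures, outputs)
    print(f"output = {slope:.4f} V/bar * pressure + {offset:.4f} V")
    print(f"3.2 bar -> {predict_output(3.2, slope, offset):.3f} V")
    print(f"2.5 V   -> {pressure_from_output(2.5, slope, offset):.3f} bar")
```

The inverse function is what a measuring system typically needs in operation, because it receives a voltage and must report a pressure.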

Calibration curves may be linear or non-linear, signifying different relationships between input and output.

- A linear calibration curve shows a direct, proportional relationship between input and output.

- A non-linear curve indicates a more complex relationship where output doesn't change proportionally with input.
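One common way to quantify how far a curve departs from a straight line is to fit a line and report the largest residual as a percentage of the full-scale output span, often called non-linearity. The sketch below uses NumPy's `polyfit` (an assumed choice; any least-squares routine would work) and compares a first-order fit with a second-order fit. The data are synthetic and follow a hypothetical slightly curved relationship.

```python
import numpy as np


def max_deviation_pct_fs(pressures, outputs, degree, fs_span_v=5.0):
    """Fit a polynomial of the given degree; return the largest |residual| as % of full-scale span."""
    p = np.asarray(pressures, dtype=float)
    v = np.asarray(outputs, dtype=float)
    coeffs = np.polyfit(p, v, degree)      # least-squares fit, highest power first
    residuals = v - np.polyval(coeffs, p)  # measured minus fitted output
    return float(np.max(np.abs(residuals)) / fs_span_v * 100.0)


# Hypothetical 0-100 bar, 0-5 V data with slight curvature: V = 0.048 P + 0.00002 P^2
PRESSURES = [0, 20, 40, 60, 80, 100]
OUTPUTS = [0.048 * p + 0.00002 * p ** 2 for p in PRESSURES]

if __name__ == "__main__":
    print(f"straight-line fit: {max_deviation_pct_fs(PRESSURES, OUTPUTS, 1):.3f} %FS")
    print(f"quadratic fit:     {max_deviation_pct_fs(PRESSURES, OUTPUTS, 2):.3f} %FS")
```

Here the straight-line fit leaves a worst-case deviation of roughly 0.53 %FS. The quadratic fit matches the synthetic data almost exactly, which is the signature of a non-linear input-output relationship.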

Factors like temperature changes, mechanical stress, and device age can influence the accuracy of the calibration curve. Regular calibration checks help maintain accuracy.

Example

Consider calibrating a pressure transducer with a full-scale output of 5 V and a pressure range of 0 to 100 bar. Suppose the input pressure is increased in steps of 20 bar for this experiment (Table 1). The graph in Figure 2 shows that the output signal changes linearly with the input pressure. However, the relationship can also be non-linear (the graph is not a straight line) for various reasons, such as the mechanical properties of the materials used in the transducer, the transducer design, and the environmental conditions. For example, temperature changes can cause the materials within the transducer to expand or contract, leading to non-linear outputs.
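For the ideal linear case in this example, the relationship can be worked out directly. Assuming zero output at zero pressure, the sensitivity S is the full-scale output divided by the pressure range, and the output V at pressure P follows from it:

$$
S = \frac{V_{\mathrm{FS}}}{P_{\max} - P_{\min}} = \frac{5\ \mathrm{V}}{100\ \mathrm{bar} - 0\ \mathrm{bar}} = 0.05\ \mathrm{V/bar}
$$

$$
V = S\,P = (0.05\ \mathrm{V/bar})\,P, \qquad P = \frac{V}{S} = (20\ \mathrm{bar/V})\,V
$$

Each 20 bar step therefore raises the output by 1 V, and a reading of 2.5 V corresponds to 50 bar.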

Table 1: Example of applied pressure and the resulting output voltage

| Applied pressure (bar) | Output voltage (V) |
|---|---|
| 0 | 0.00 |
| 20 | 1.00 |
| 40 | 2.00 |
| 60 | 3.00 |
| 80 | 4.00 |
| 100 | 5.00 |

Note: The values given in Table 1 are for illustration only and may not match the results of actual experiments.

Figure 2: Pressure transducer calibration curve. The input pressure is plotted along the X-axis, and the output signal is plotted along the Y-axis.

How often to calibrate a pressure transducer

The calibration frequency of a pressure transducer depends on several factors, like:

- Local, national, or environmental regulations

- The reason for calibration (quality, safety, or standard maintenance)

- Process and ambient conditions

For instance:

- A pressure transducer installed inside a closed environment with stable conditions can be calibrated every 4 - 6 years. However, if installed outside, it should be calibrated every 1 - 4 years, depending on ambient conditions.

- Halve the calibration interval if the pressure transducer has a remote diaphragm seal. This is because of the increased mechanical stress caused by process or ambient temperature fluctuations and the potential for physical damage to the diaphragm/membrane.
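The two rules of thumb above can be written as a small lookup. First pick the interval range for the installation, then halve it if a remote diaphragm seal is fitted. This is only a sketch of this section's guidance. The function and parameter names are hypothetical, and applicable regulations and actual process conditions take precedence.

```python
def calibration_interval_years(outdoor: bool, remote_diaphragm_seal: bool) -> tuple[float, float]:
    """Return the (shortest, longest) suggested calibration interval in years.

    Hypothetical helper encoding only the rules of thumb in this section.
    """
    # Stable indoor installation: 4-6 years; outdoor installation: 1-4 years.
    low, high = (1.0, 4.0) if outdoor else (4.0, 6.0)
    if remote_diaphragm_seal:
        # Halve the interval to account for the extra stress on the seal.
        low, high = low / 2.0, high / 2.0
    return low, high


if __name__ == "__main__":
    for outdoor in (False, True):
        for seal in (False, True):
            low, high = calibration_interval_years(outdoor, seal)
            place = "outdoor" if outdoor else "indoor"
            print(f"{place}, diaphragm seal={seal}: every {low:g}-{high:g} years")
```

For example, an outdoor transducer with a remote diaphragm seal comes out at every 0.5-2 years.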

Transducer calibration standards and certifications

Standards

Calibration standards are reference values against which the device's measurements are compared during calibration. Organizations such as ISO publish calibration standards, and national metrology institutes such as NIST maintain the reference standards to which measurements are traced. Ideally, pressure transducer calibration is performed by a laboratory accredited to ISO/IEC 17025, which demonstrates that qualified personnel perform the calibration accurately.

Certifications

Certification is the formal recognition that a calibration laboratory has met the requirements and is competent to perform calibration tasks. Key certifications include:

- ISO 9001: Establishes criteria for a quality management system, ensuring consistent performance and service.

- ISO/IEC 17025: Specifies the general requirements for the competence of testing and calibration laboratories.

- NIST Traceable Certification: Confirms that the calibration measurements can be traced through an unbroken chain of comparisons to standards maintained by the National Institute of Standards and Technology (NIST).

Read our pressure transducer selection article for more details on how to select a transducer for an application.

FAQs

How do you calibrate a pressure transducer?

Apply known pressures using a reference standard, record transducer readings, compare the input and output values, and adjust the transducer's output if it deviates from the expected values.

Why must the pressure transducer be calibrated?

Calibration ensures accurate pressure measurements by correcting any deviations in transducer output, maintaining reliability and precision in various applications.